Two glass boxes

In B2B AI, the customer is a builder. That changes what the product is.

In B2B AI, there are two users:

The customer configuring the system.

The end-user asking a question and getting a resolution.

It’s tempting to collapse these groups together when designing, but the first user, the customer-builder, needs different evidence to understand and trust the system they are helping to create.

Most AI systems are black boxes by default: inputs go in, answers come out, and the system in between is hard to inspect. A lot of trust work focuses on making the answer more transparent – with citations, visible sources, input context, status signals, and clear admissions of uncertainty. That matters. But it mostly serves the end-user.

Customer-builders need visibility one layer deeper: “Why did this happen, and what can I do about it?”

Consequently, you must build two glass boxes.

When there’s only one visible thing, that’s what gets blamed

In AI products, the final end-user answer is what both the customer-builder and end-user see and judge. But a bad answer can come from many places: missing content or knowledge, incorrect configuration (guidance or guardrails, for example), or the model itself. If those layers are invisible, every failure collapses into the same explanation: “the AI is broken.”

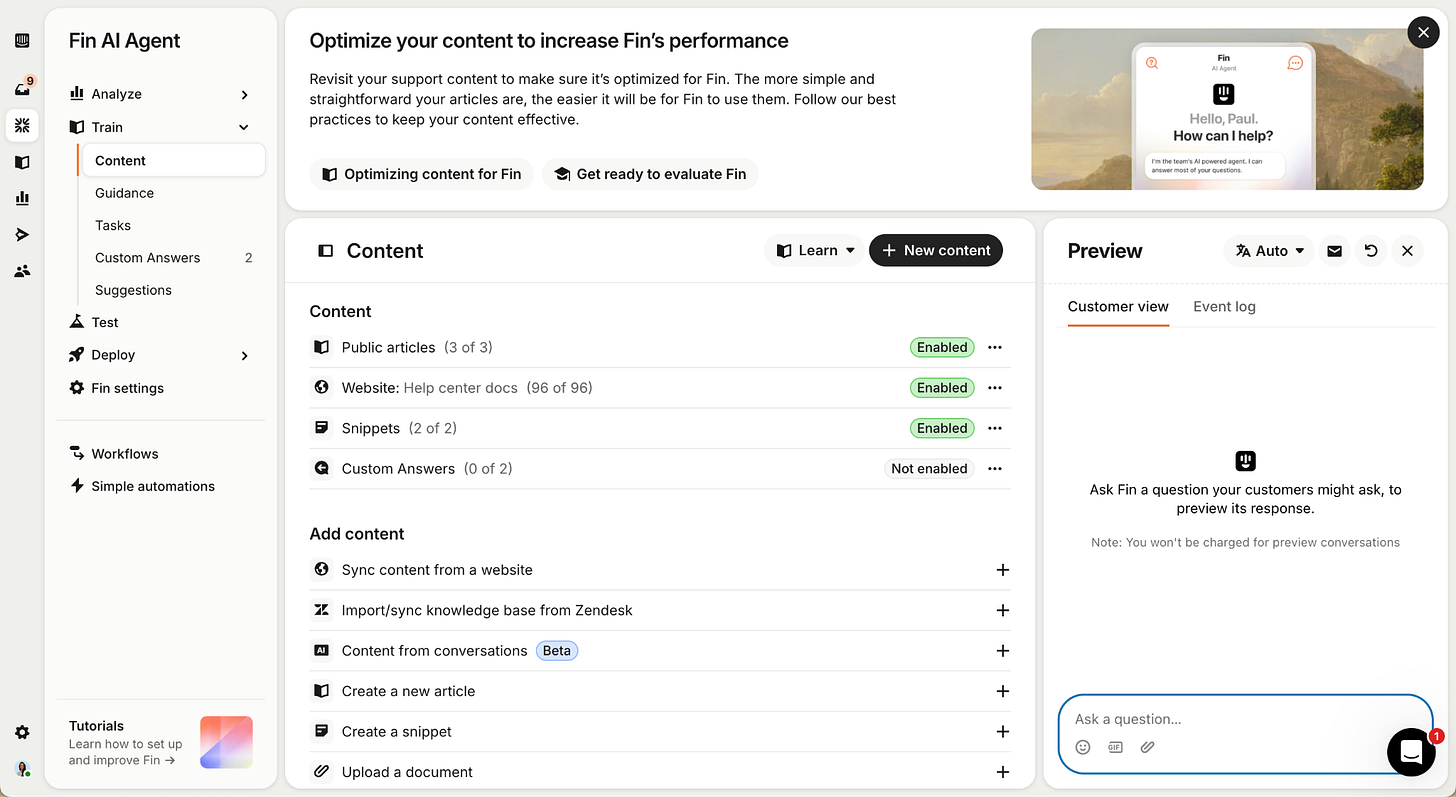

Here’s a story from the trenches back when I was on the Fin Content team. A prospective customer-builder was trying out Fin for the first time. As part of the setup, they entered their website URL into the content page and saw hundreds of pages come back as “enabled.”

What the content page looked like before. No visibility, simply “enabled” / “not enabled”. That didn’t communicate the nuance of website imports, which are the most popular import type for new customers trying out Fin for the first time.

To them, that meant the system was ready, so they used the Preview panel to test Fin. They asked a product question they already knew the answer to and Fin got it wrong. From the outside, it looked like a hallucination, since the correct answer was on their site inside an FAQ accordion.

The actual cause was upstream: the page had been detected, but the content inside the FAQ accordion’s collapsed section hadn’t been successfully read, so the answer never made it into Fin’s knowledge base and it responded with incomplete information.

Two trust failures in the same moment, for two different parties. Had this reached an end-user, they would have seen a wrong answer with no signal it was wrong. The customer-builder almost concluded “Fin is not good” because the import surface said “enabled” and gave them nowhere else to look.

Fin doesn’t usually fail in isolation, so the fix is rarely at the model layer. Most issues originate during setup – wrong content, configuration, or guidance. So the product team must build a glass box around these processes.

In this instance, that means allowing the customer-builder to see what has been read, what was excluded, what failed, and why. Otherwise, “Fin is broken” is the only conclusion the customer can draw, because Fin is the only part of the system they can see.

What goes under each glass box

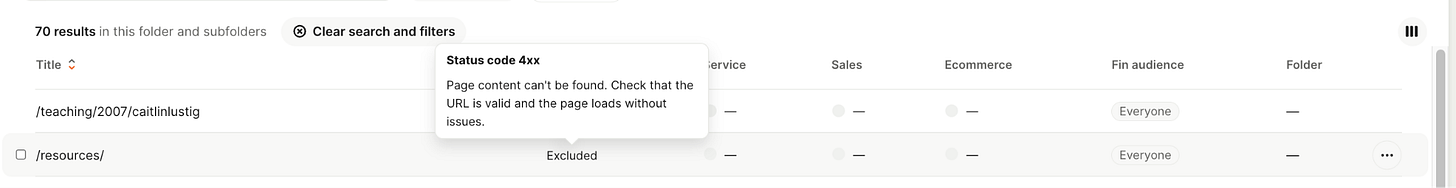

When we rebuilt the content import experience to expose failure modes and enable troubleshooting, we built a glass box for that part of the experience.

Glass boxes build trust. But “show everything” isn’t a strategy. Revealing too much creates noise and false confidence. Too little leaves people guessing. So the real work is judgement: what goes in the black box, what goes under glass box one for the customer-builder, what goes under glass box two for the end-user.

The principle I keep coming back to is one I borrowed from data science. Early in my DS career I used to put two decimal places on high-level trend reports for product marketing because it felt more rigorous. Actually… for product marketing, what mattered was the trend. The decimals were noise dressed as precision. The lesson learned: detail to the extent it’s actionable for the audience. Anything beyond that is distraction, regardless of how true it is.

The same principle applies to glass boxes, with one twist: actionability isn’t the same for everyone. We must ask: what can each audience do with what they’re seeing?

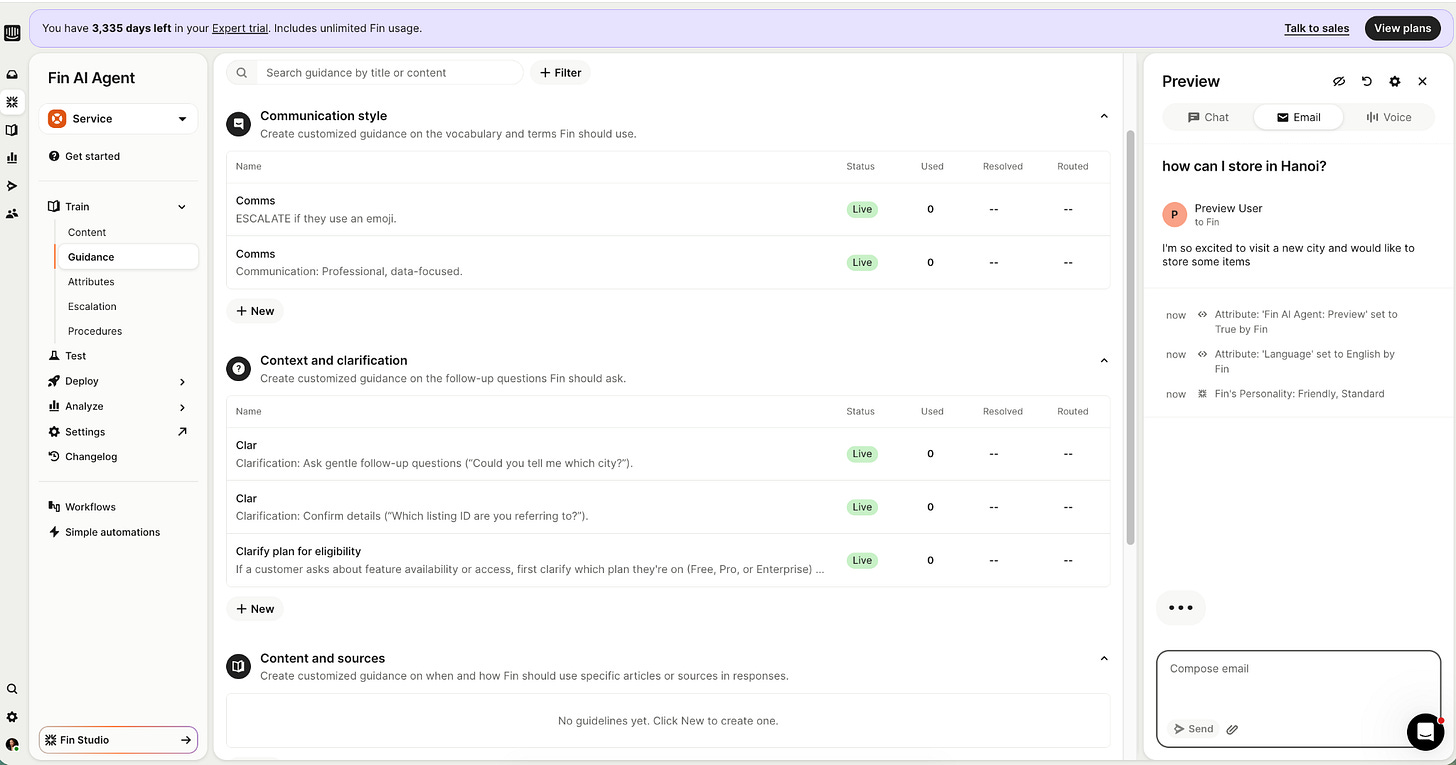

Glass box one: the customer-builder. Their action is “iterate the configuration,” so the surface shows what’s accessible to Fin and what’s configurable; what content was available and retrieved; what guidance shaped the response; what task is being executed. Anything they can change, they need to see. Anything they can’t act on stays out.

Glass box two: the end-user. Their action is “decide whether their query is resolved,” so the surface shows what helps them make that decision: the answer and sources that they can verify, should they want to. Anything beyond it is two-decimal-places of noise.
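One way to picture the split is a single record of how an answer was produced, projected into two views. The sketch below is illustrative only and is not how Fin is built: `AnswerTrace`, its field names, `builder_view`, and `end_user_view` are all hypothetical names chosen to mirror the lists above.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Optional


@dataclass
class AnswerTrace:
    """Hypothetical record of everything that went into one answer."""
    answer: str
    sources: list[str] = field(default_factory=list)
    retrieved_content: list[str] = field(default_factory=list)
    guidance_applied: list[str] = field(default_factory=list)
    task: Optional[str] = None
    # Stays in the black box: neither audience can act on it.
    model_internals: dict = field(default_factory=dict)


def builder_view(trace: AnswerTrace) -> dict:
    """Glass box one: everything the customer-builder can change."""
    return {
        "answer": trace.answer,
        "sources": trace.sources,
        "retrieved_content": trace.retrieved_content,
        "guidance_applied": trace.guidance_applied,
        "task": trace.task,
    }


def end_user_view(trace: AnswerTrace) -> dict:
    """Glass box two: only what helps decide whether the query is resolved."""
    return {"answer": trace.answer, "sources": trace.sources}
```

Both views read from the same trace, so the two boxes cannot drift apart. What differs is only which fields each audience can act on.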

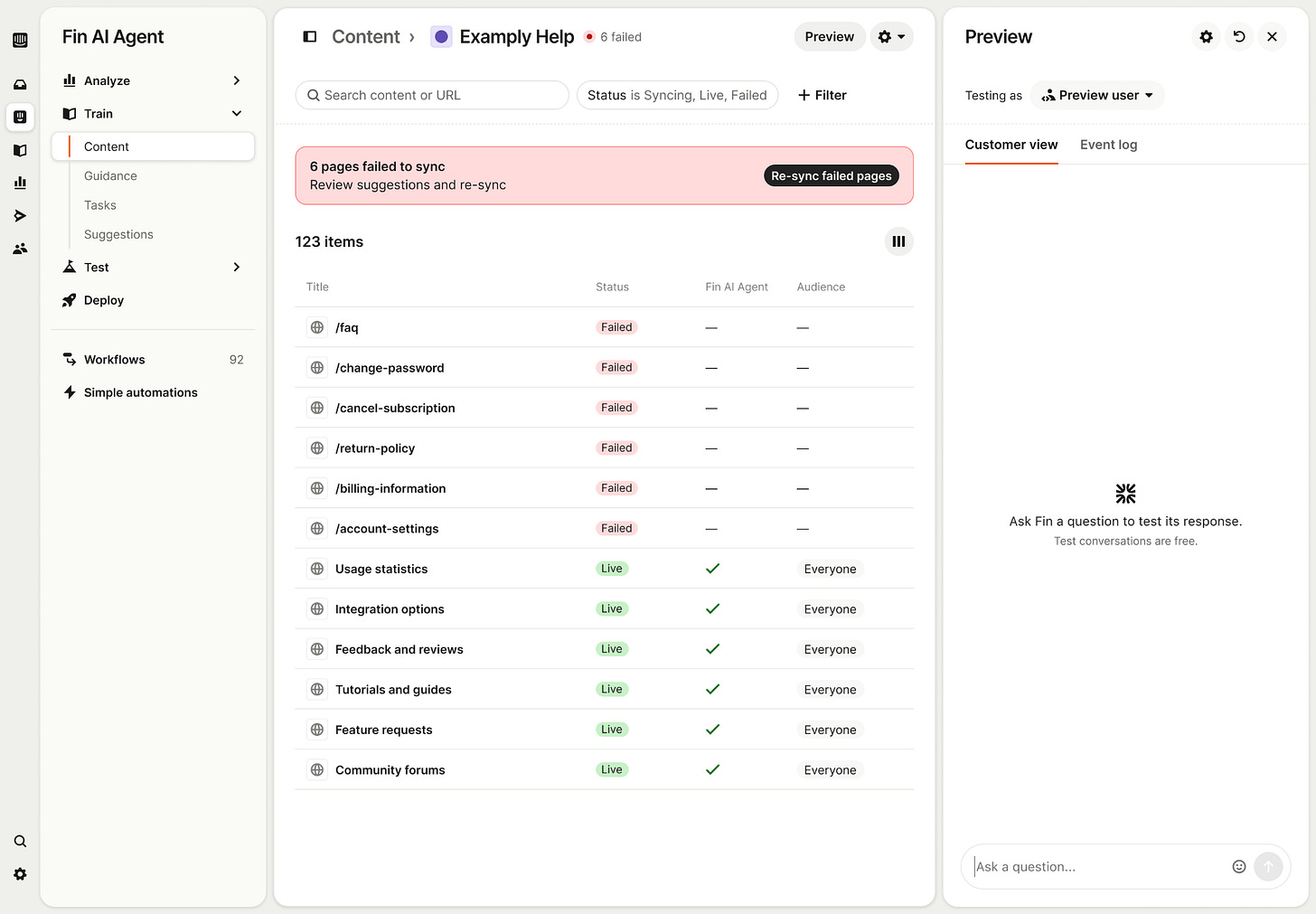

A small example: one team thought Fin had become inconsistent because PDF source links appeared in some answers and not others. Initially, the model was deemed flaky, but the real cause was a guidance rule that needed iteration. The team could see and fix it because the Preview tool showed the one configuration choice that was producing this behaviour.

The harder version of this judgement is the one that goes the other way.

Sometimes the right call is to leave detail out because it confuses more than it helps. The website import surface is an example we lived through. Underneath it, website imports can fail for a long list of low-level technical reasons: different kinds of network issues, blocking, parsing problems, etc. We could have surfaced every one but chose not to, as most customers can’t act on the raw error type even when they see it. We collapsed the long tail into a small number of high-level categories, each tied to a fix the customer could actually make. The glass box got smaller.
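As a rough sketch of that collapse, the mapping below folds a long tail of raw errors into a few categories, each carrying a fix. Everything in it is an assumption made for illustration: the raw error codes, the category names, and the suggested fixes are hypothetical and are not Fin's actual taxonomy.

```python
from __future__ import annotations

from collections import Counter
from enum import Enum


class ImportIssue(Enum):
    """Hypothetical high-level categories; each value is the fix shown to the customer."""
    BLOCKED = "Allow the crawler through your firewall or bot protection, then re-sync."
    UNREACHABLE = "Check that the page is public and the URL is correct, then re-sync."
    UNREADABLE = "Expose the content as plain page text, or add it as a separate source."
    UNKNOWN = "Contact support with the page URL."


# Hypothetical raw error codes; a real crawler would have its own, longer list.
RAW_TO_ISSUE = {
    "http_403": ImportIssue.BLOCKED,
    "robots_disallow": ImportIssue.BLOCKED,
    "captcha_challenge": ImportIssue.BLOCKED,
    "dns_failure": ImportIssue.UNREACHABLE,
    "connect_timeout": ImportIssue.UNREACHABLE,
    "http_404": ImportIssue.UNREACHABLE,
    "empty_body": ImportIssue.UNREADABLE,
    "js_render_failed": ImportIssue.UNREADABLE,
    "collapsed_section_skipped": ImportIssue.UNREADABLE,
}


def categorize(raw_error: str) -> ImportIssue:
    """Map a raw error code to a category; unmapped codes fall back to UNKNOWN."""
    return RAW_TO_ISSUE.get(raw_error, ImportIssue.UNKNOWN)


def summarize(raw_errors: list[str]) -> dict[ImportIssue, int]:
    """Count failed pages per category, which is what the surface would show."""
    return dict(Counter(categorize(e) for e in raw_errors))
```

The raw codes still exist for the team debugging the crawler. The customer only sees the category and its fix, for example `categorize("http_403").value`.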

Remember: visibility isn’t a virtue. It’s a tool, and like any tool, it can make things worse when it’s the wrong size for the job.

Once you accept ‘detail to the extent it’s actionable,’ how and where you present it matters just as much

A glass box is only useful if it sits next to the thing it’s about. Configuration on one page and logs on another means the customer has to reconstruct context every time they look. Where possible, don’t make them travel or hold things in working memory to find the evidence for a decision they’re making right now.

Example 1: the Content page after. Beyond “enabled”, the page tells you what failed and what’s live, and once the issues are fixed, it lets you re-sync.

Example 2: the Preview panel. It sits inside the same pages where Fin is configured. It works as a glass box in two ways: it lets customers test a new configuration in the place they just set it, and it surfaces every event Fin was configured around so the customer can trace the root cause of an answer.

Beyond Fin

If you’re developing AI for builders – people who configure it for their own end-users – you are building two glass boxes at once. One you control end-to-end, and one your customer assembles in your interface.

The second one is the part most teams underbuild. It’s also the part that decides whether your customer can trust your product, and confidently put their name on it.