Fill in the blanks

Left to its own devices, AI will push you to the middle

AI will always fill in the gaps. Whatever you don’t specify, it decides – toward the average of everything it’s trained on. The result is a generic purple gradient, a repetitive sentence structure (hello, ‘it’s not X, it’s Y’), or corners that always seem slightly too rounded.

The interface encourages short, simple input. Most AI tools have a prompt bar only about three lines tall. And while that level of detail might work for quick fixes, it will leave much to be desired when you’re building something more meaningful.

As Michelle says: “Every blank you leave is a decision you’ve handed over.”

To get worthwhile performance out of your Agents or AI systems, you need diligence upfront. For us, that looks like providing Claude with 300 detailed skills, hooks that enforce how it works inside our codebase, and read-only access to production data.

The goal is simple: the less Claude has to guess, the better the output.

This week, we recap stories from across our team on how they collaborate with AI, including the workflows and org structures that deliver the best results, the experiments they’re learning from, and how they’re feeling about the changing nature of work.

When you don’t have the words

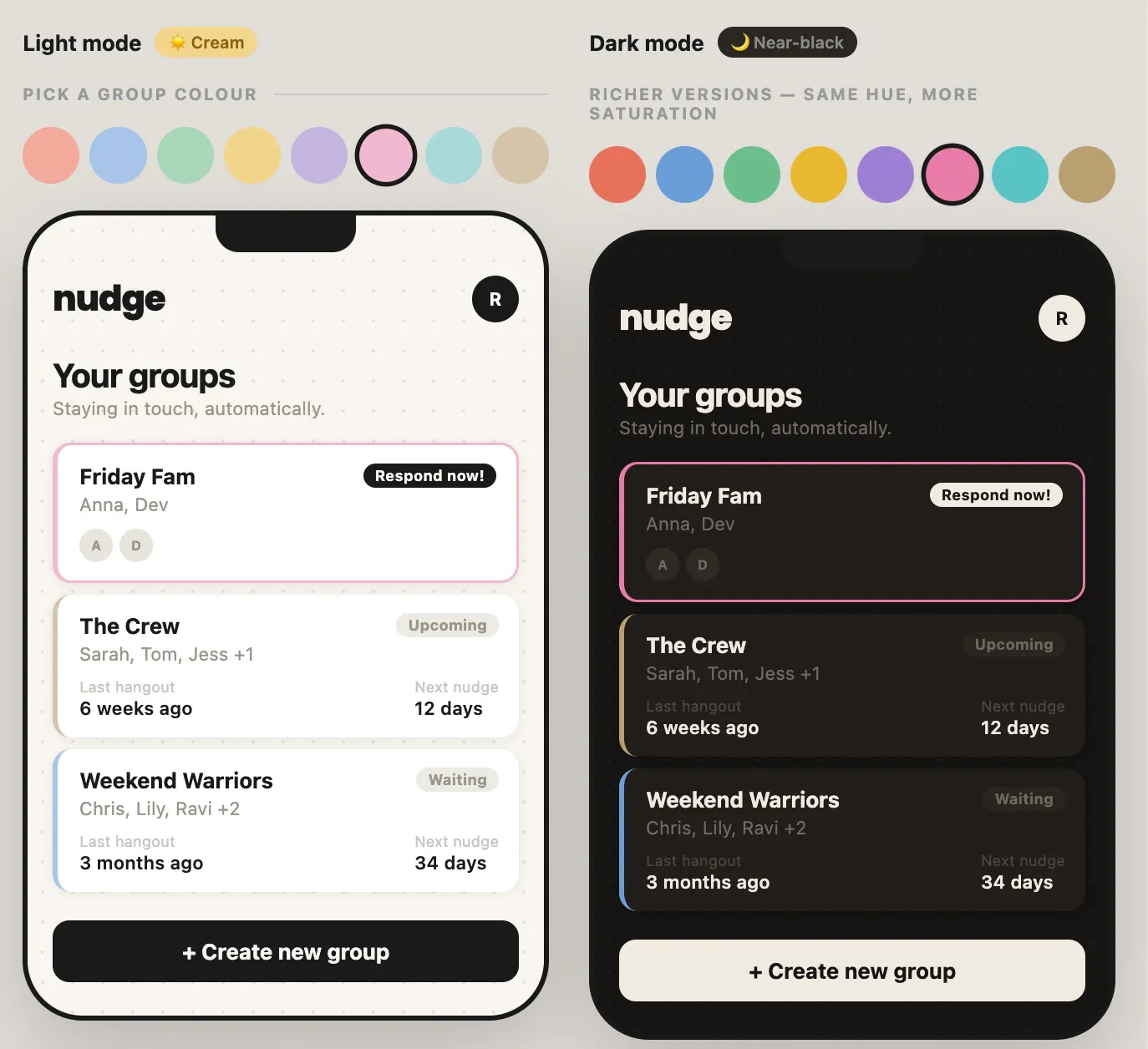

Over the past few months, Róisín has been building Nudge – an app that helps busy friends stay in touch.

The frontend came easily. She’s a designer; wireframes are her bread and butter, and Claude turned them into working prototypes. With her experience, she could identify an issue: “This button is too far from that input” or “The error message doesn’t explain what went wrong.” Then she and Claude would debate the best solution and action it.

The backend is a different story.

Claude had to take the lead.

“The terminology alone was like Klingon to me.”

Róisín started every session by reminding Claude that she was a complete beginner and asking it to do everything in the browser rather than the terminal. It walked her through procedures step by step, explaining as it went, and worked hard to detangle screenshots of console errors. Over time, she’s learned what error codes mean and how to debug simple things on her own.

But Claude can only do so much.

Nudge’s pending invite status kept throwing 400s, and after debugging it three times, Claude suggested cutting it. The feature wasn’t essential for beta testing, and it was delaying the valuable insights she’d gain from shipping to users. It was the right call.

Knowing what to cut, when to stop iterating, and what’s good enough is something Róisín is still learning. In her own words: “You learn it by shipping.”

Read more about Róisín and Nudge here.

Three lines isn’t a brief

Most coding Agents have a mode called “dangerously accept all permissions.” Michelle argues the bigger risk is the posture most people bring to AI: dangerously accept all opinions.

AI presents recommendations confidently, making you feel like it knows best. It doesn’t – it knows the average of its training data. In most cases, that’s not enough.

Her piece walks you through three practical tools for building the muscle of better direction:

Plan mode: Separates thinking from doing. Map out intent before anything gets built, then push beyond the build plan to the product plan: what should this feel like, what would make someone use it a second time?

Skills: Small bundles of instructions that give AI better judgment in specific areas. Worth bookmarking: Superpowers for brainstorming, Impeccable’s Shape skill for structured discovery interviews, Get Shit Done for spec-driven planning.

Living docs: Brief.md, design.md, architecture.md saved in your project folder. The AI references them every session. Your prompts get lighter because it already knows where you’re coming from.

According to Michelle: “The building is the easy part. The hard part is knowing what to build and being detailed and opinionated enough about it so what gets built is actually yours.”

Get all of Michelle’s tips here.

The treadmill that punishes mastery

With the rise of AI tools, designers are now being asked to run two scorecards simultaneously – technical fluency and craft – and it’s a tough balancing act. Worse still, according to John, the demands on each are ramping up.

As the AI space gets more competitive, new tools and models come to market almost every day, making it hard to achieve true mastery. The external pressure doesn’t help. The AI corner of design Twitter and every internal Slack channel runs on a constant drip of “look what I shipped this weekend.” Any designer who pauses to think feels like they’re falling behind.

“At the same time, you’re still expected to be a world-class designer. Now that anyone can ship, the bar is only going up – for craft, for taste, for the thousand small decisions that make products feel great.”

John fears the designers attempting to excel in both disciplines risk running themselves into the ground. But he also thinks a reprieve could be on the horizon: “There’s probably a correction of some sort coming. The pace isn’t sustainable.”

In the end, “the designers who come out of this strongest won’t be the ones who ran hardest on either axis. They’ll be the ones who stayed clear-headed enough, and rested enough, to do the judgement work that compounds.”

Read his full piece here.

Exit the comfortable middle

Karen leads a 40-person Research and Data Science (RAD) organization at Intercom. Her conclusion from the last few months of working with AI isn’t what you might expect.

“AI commoditises execution. It does not commoditise judgment. And for research and data science teams, that’s the difference between being relevant and being replaced.”

The work most at risk from AI automation, she argues, isn’t the obvious tactical work but the comfortable middle: the incremental analysis that feels strategic, the dashboard update that is visible but never changes a decision, or the simple research exercise. All of this can now be handled by stakeholders with the help of AI.

So how can researchers and data scientists stay relevant?

According to Karen, they can move in three directions:

Down: Encode business context as infrastructure to make self-serve trustworthy.

Up: Move from delivering insights to building decision systems.

Back: Lean into the human edge, the slow, formative work that doesn’t exist in any dataset yet.

Read her full analysis here.

Write it all down

We’ve written a lot about our 2x initiative but no one has spoken about it more than Brian.

Last week, he sat down with Akash Bajwa to talk through what building AI-first looks like inside a 15-year-old Rails monolith.

Getting Claude to perform reliably within such a codebase meant building a harness around it – with linters, standardised patterns, and explicit written guidance about the right way to do things. Brian actually thinks the process “mirrors human onboarding”: you show Claude what good looks like, which parts of the code represent the old way, and how to get things done. The difference is you have to write it all down.

How is the harness working?

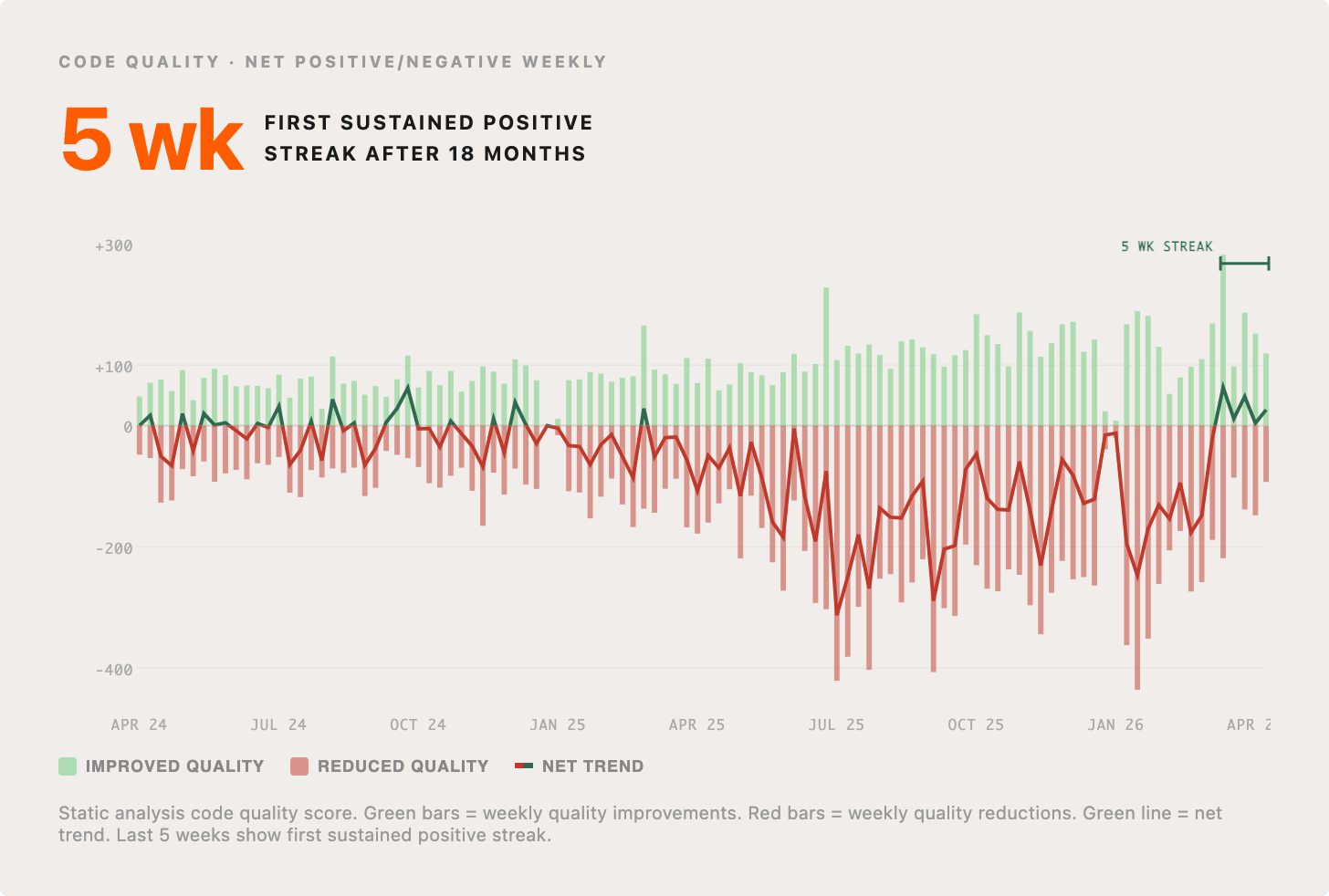

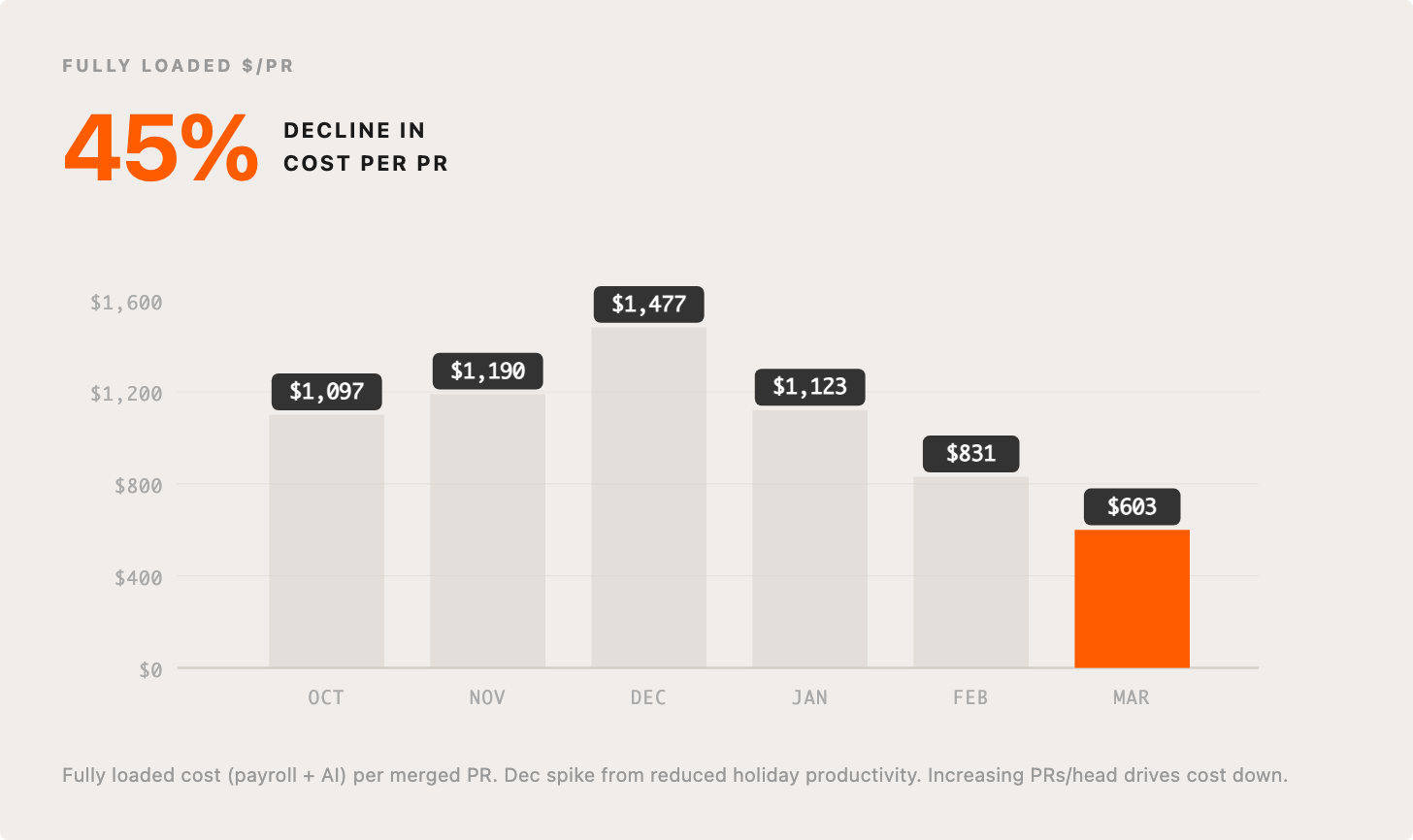

Brian highlights two metrics: quality of code and cost per PR.

Over the past few weeks, code quality at Intercom has been going up, after an initial period of expected decline. Brian credits the auto-approval process for this, as it encourages engineers to produce good code on the first pass. PRs that are small, single-purpose, use feature flags, and stay out of dangerous code paths get approved without a human reviewer. Kesha and Niamh recently wrote about this process.
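To make the auto-approval criteria concrete, here is a minimal sketch of how such a check could be expressed. It is not Intercom's implementation: the line threshold, the list of dangerous paths, and the `PullRequest` fields (including how "single-purpose" is measured) are all assumptions made up for illustration.

```python
from dataclasses import dataclass

# Hypothetical values, chosen only for this example.
MAX_CHANGED_LINES = 200
DANGEROUS_PATH_PREFIXES = ("db/migrate/", "config/", "app/models/billing/")


@dataclass
class PullRequest:
    changed_lines: int
    changed_files: list[str]
    distinct_purposes: int  # assumed to be labelled by the author or a tool
    uses_feature_flag: bool


def auto_approval_blockers(pr: PullRequest) -> list[str]:
    """Return the reasons a PR still needs a human reviewer (empty = eligible)."""
    blockers = []
    if pr.changed_lines > MAX_CHANGED_LINES:
        blockers.append("too large")
    if pr.distinct_purposes != 1:
        blockers.append("not single-purpose")
    if not pr.uses_feature_flag:
        blockers.append("no feature flag")
    # str.startswith accepts a tuple, so one call checks every prefix.
    if any(path.startswith(DANGEROUS_PATH_PREFIXES) for path in pr.changed_files):
        blockers.append("touches a dangerous code path")
    return blockers


small_pr = PullRequest(
    changed_lines=40,
    changed_files=["app/services/invites.rb"],
    distinct_purposes=1,
    uses_feature_flag=True,
)
print(auto_approval_blockers(small_pr))  # prints []
```

Returning the list of blockers rather than a plain yes/no tells the engineer exactly what to change to qualify, which fits the idea of encouraging good code on the first pass.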

When it comes to costs, Brian encourages companies to avoid becoming fixated on token spend. What matters more is the fully loaded cost of a PR, including salaries, management overhead, review time, and rework. When you measure that, the economics of AI-assisted engineering look very different. “We have an awful and ever-growing bill,” he says, “and yet the cost per change is dropping. That’s exactly what you want.”
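The arithmetic behind that claim can be sketched in a few lines. The function below is one assumed way to define a fully loaded cost per PR, and every figure in the example is invented to show the shape of the effect; none of them are Intercom's numbers.

```python
def fully_loaded_cost_per_pr(
    token_spend: float,
    salaries: float,
    management_overhead: float,
    review_cost: float,
    rework_cost: float,
    merged_prs: int,
) -> float:
    """Total cost for a period divided by the PRs merged in that period."""
    if merged_prs <= 0:
        raise ValueError("merged_prs must be positive")
    total = token_spend + salaries + management_overhead + review_cost + rework_cost
    return total / merged_prs


# Invented figures for one month, before and after adopting AI tooling.
before = fully_loaded_cost_per_pr(
    token_spend=1_000, salaries=100_000, management_overhead=20_000,
    review_cost=15_000, rework_cost=10_000, merged_prs=200,
)
after = fully_loaded_cost_per_pr(
    token_spend=20_000, salaries=100_000, management_overhead=20_000,
    review_cost=8_000, rework_cost=10_000, merged_prs=400,
)
print(before, after)  # prints 730.0 395.0
```

In this made-up scenario the token bill grows twentyfold, yet the cost per PR falls because throughput doubles and review time shrinks. That is the pattern Brian describes: a growing bill alongside a dropping cost per change.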

The interview also covers hiring for openness over experience, why committing to Claude Code is less of a risk than staying agnostic, and how org structure has changed.

Read the full interview here. Brian also appeared on the On Rails podcast and the HangarDX podcast to talk about our use of Claude Code, so tune in if you want to go deeper.

Our final thought

When anyone can ship, the real difference maker is your point of view and your ability to communicate it to your AI. Getting great results requires great documentation, down to every detail.